ChatGPT: The Future or a Fad?

OpenAI's newest development is touted as a game-changing program that will shift the focus of technological development closer and closer to AI dominance.

ChatGPT is the latest rage. Techies around the world are salivating at the thought of AI nuking advertisers (so far so good) and taking the ‘writer’ out of almost anything (perhaps a little less good). Maybe we won’t need teachers any more - not that there are many left! ChatGPT is an AI and natural language processing model that clearly can’t do its own branding, but has the potential to improve human communication and language understanding in a variety of ways.

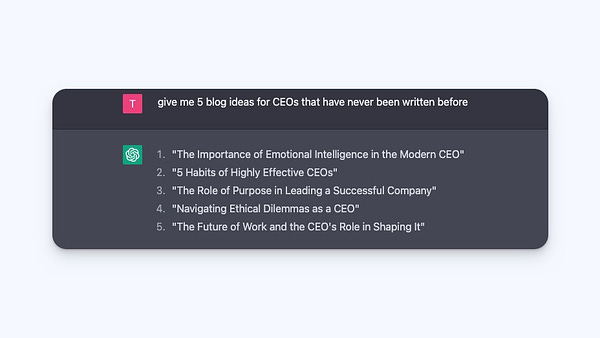

And to get more of us salivating, here are some examples of how it might be used in the future:

Improving language translation and making it more accurate and accessible. Finally getting us a pint of beer in any country.

Helping people with disabilities or language barriers to communicate more easily.

Enhancing human-computer interaction and making it more natural and intuitive. I thought that was Apple?

Plus, the nice little Steve-Jobs-rip-off-like ChatGPT-bot will assist in tasks such as content creation and data analysis. Which could mean bye bye marketing industry and Stephen King. And, of course, hello to automated, almost infinite diatribes of fake news! OMG!

It should also be noted that as a general-purpose model, ChatGPT can be used in various industries and its potential use cases are not limited to the examples mentioned above. Which is human-writer (i.e. honest) speak for: this little bot could stuff things up for almost anyone.

However, it is important to note that AI models are only as good as their data and are constrained by the ethical and societal biases that the data itself might have. So the overall impact of ChatGPT will depend on how it is used, and what kind of data it is fed. (After all, Sun news in equals the same Sun news out.) And this is what ChatGPT thinks about its own potential to affect human life when prompted through the new system set up for asking it your pressing questions. It’s brief and cogent but, as will be explored, it has some flaws that it seems to have some cognisance of. I.e. actually more like Fox News.

Overall though, the writing is fairly basic, it lacks the tone and more casual, fun style of a Letts Journal article (meaning there’s a small shred of hope for us), and most importantly, it doesn’t answer the question of why. It’s perhaps a flaw of the prompt, but also a flaw of the way AI is designed to respond. When you ask a human to explain how ChatGPT will change human life in the future, you’re likely to get a much more complicated, fleshed-out response that, most importantly, is grounded in the why, where, what, and when as well as the “how” in the question.

“It seems the little ChatGPT-bot still has much to learn from us... For about 3 nanoseconds before it takes us over”.

AI has constantly been the bogeyman of the modern tech space, seemingly always just 5 or so years away and guaranteed to replace all human productions whenever the eternal 5 years finish up and it finally arrives - sounding a bit like the US House of Representatives voting on a new Speaker. Well, at the turn of the year the launch of OpenAI’s ChatGPT AI bot has led many to question if we have finally actually entered that 5-year period. House leader Kevin McCarthy certainly hopes he has.

Heralded as the end of “school essays” or even the new source of human creativity and content creation (yep, there goes the marketing industry again), the attention accompanying ChatGPT-bot is inescapable - proving that it can do its PR better than Edelman.

But just how good is this game-changing program? What does it mean for the future? And how much will it truly impact human lives?

One of the largest implications of the new ChatGPT program is that it will bring an end to English, History, Geography, basically any essay-based homework. While this is already being addressed with the introduction of controls in ChatGPT which would allow teachers to tell where plagiarism has taken place, it must also be recognised that the quality of the work produced by the bot is actually very low. Sorry kids. At the moment it would probably achieve around a middling grade in a US Middle School or British Secondary School, but more importantly its scope for improvement is massively restricted by its inherent derivative bot-ness.

The fact is that being a bot based on machine learning, one that draws on the collective knowledge of whatever data sources have been plugged into it, eternally limits its ability to produce anything transformative or humanistically creative without simply regurgitating the knowledge it has already been given - sounding very much like the education/marketing/content industries today!

What should probably be given more attention is its potential utility for brainstorming. Already some more minor authors and creatives have suggested that they see uses for it there, using it for prompts and creative directions to go in, as well as to bounce ideas off, before actually going and producing the creative work itself.

We might want to begin recognising that the service will be able to recreate the works of Shakespeare written in a modern Cockney accent, but it might not be able to capture the spark of a Shakespeare inspired by and building upon human stories to create truly transformative and human art. Is that such a bad thing? Is there a reason why AI needs to be a service capable of producing human contributions in order to be a transformative project? Not if it gets my kids better grades!

Another contribution academics have suggested this ChatGPT program could make to human creative production is in translation. While the modern world has interconnected a lot of intellectual discussion, the limits of language and the disparities between fields that have been conceptualised in different language groups have led many to suggest that modern academics will need greater and greater language skills to fully take part in a globalised intellectual debate. ChatGPT and other AI tools could answer that in an alternative way.

Given the importance of knowledge and of discrete or unique nuance in translation, ChatGPT’s machine learning and knowledge base could uniquely position it to produce accurate and usable translations of texts that would normally take years of learning and human labour, and a good deal of money, to translate. Meaning one day we will have a Piers Morgan article read in the coffee shops of Nepal or Peru, which, in turn, might lead to their people’s next mass migration.

A major restriction on the ChatGPT program’s utility thus far, though, comes from its programmed integrity and reliability. While it is theoretically drawing upon what are assumed to be facts of human life and production, it is also dependent upon the natural biases and flaws of humans. Which means near zero integrity.

Any greater integrity assigned to it is unduly placed. Perhaps nothing illustrates this better than the popular trend of showing it getting stumped by some of the world’s favourite mind puzzles and tricks. Whether it is simple questions such as how much two things must cost if they total £1.10 and are £1 apart, or as complex as the kind of interpretative prompts that it may be fed by a high school student desperate to get a quick essay on African River Systems produced, ChatGPT is wrong, is wrong frequently, and worst of all is wrong confidently.
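For anyone who wants to check the £1.10 puzzle themselves, here is a minimal sketch of the arithmetic. The function name and the choice to work in whole pence are our own assumptions for illustration; this has nothing to do with how ChatGPT arrives at its answers. If the cheaper item costs x, the dearer one costs x plus the difference, so 2x equals the total minus the difference.

```python
def solve_sum_and_difference(total_pence: int, difference_pence: int) -> tuple[int, int]:
    """Return (cheaper, dearer) prices in pence, given their total and their difference.

    Hypothetical helper for illustration only.
    """
    # cheaper + dearer = total and dearer - cheaper = difference,
    # so 2 * cheaper = total - difference.
    cheaper, remainder = divmod(total_pence - difference_pence, 2)
    if remainder or cheaper < 0:
        raise ValueError("no whole-pence solution for these numbers")
    return cheaper, cheaper + difference_pence


# The puzzle from the text: two items total £1.10 and are £1 apart.
cheaper, dearer = solve_sum_and_difference(110, 100)
print(f"cheaper item: {cheaper}p, dearer item: {dearer}p")
```

The tempting answer of 10p and £1 fails the check: those two prices are only 90p apart.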

The little Chatty-bot’s proponents will suggest these are kinks that simply need to be worked out, and will be worked out in future iterations. Which is not surprising given they are in hock to Microsoft for about $1 billion. The problem is that this is not necessarily a programming bug to be ironed out; this is a bot inheriting the human fallibility it is being fed through machine learning and the sources it is taking that learning from. When we assign AI some sort of inherent reliability because it is obviously a confident bot built on human knowledge and assumed objective truth, we risk institutionalising certain human flaws that restrict our ability to know what is truth and what is fiction. Back to reading the Sun.

Perhaps worse than its willingness to make human mistakes is its tendency to confidently lie when making them. Proving that it has already started to ingest Boris. While this is more an issue with GPT-3, the predecessor AI text generator to ChatGPT (its dad?), ChatGPT is not free of it, not least because a main reason it shows up less is that the creators, rather than fixing GPT-3’s flaws, hid them by making the bot refuse to give website links or reference sources of knowledge. Which is a bit like getting rid of the garbage before the FBI can rootle through it.

Nevertheless, GPT-3 has a real issue with making up sources. This compounds the lying: intellectual integrity and development depend on the ability to name the sources of your knowledge, and making up sources only creates doubt and unreliability around the knowledge being produced. If publications like Wired are willing to use the program to write persuasive yet misleading and even false news articles, what is stopping less scrupulous publishers from creating mass troves of clickbait from GPT-3 and ChatGPT’s ability to lie or deceive convincingly? Back to the Sun thing again.

In the end, it might be acceptable to conclude that ChatGPT and AI will always be restricted by the fact that they are built on human knowledge, but are not human themselves. But I wouldn’t take that to the career bank!

They might never have the truly non-derivative capability of humans for creativity, nor will they be the infallible harbingers of objective truth. But failing that does not make AI societally un-transformative. Try saying that after a couple of drinks.

Obviously other uses have been demonstrated, but another key, and almost uniquely transformative, way of expanding our human knowledge base could be to make ChatGPT into the world’s first internet librarian. The internet has become the greatest library of human knowledge, but for so many of us, niche ideas, works and problems cannot be found in the incredibly vast vaults of the world wide web.

A librarian at an in-person library is a key figure for utilising that institution’s scale of knowledge. They are not just the people who put our books back when we finish with them and tell us to shut up when we chat (not-bot) too loud.

Human librarians have intimate knowledge of the archives they hold the keys to, and they can provide researchers and other users with the kind of access that those users would never be able to find for themselves. ChatGPT can do that for the internet, and in doing so can change the way humans collaborate and debate forever, and perhaps do it much better than it writes some failing 15-year-old’s history paper. Even if maybe not for the fifteen-year-old kid!

Keep up to date with the Letts Journal’s latest news stories and updates on Twitter.